I’m going to explain how you can set up a very cheap and efficient web load balancer. I’ve rolled it out in production and non-production environments, publishing all the web applications of multiple environments from continuous integration. I adopted this solution in our production environment after running benchmark tests with the “ab” and “siege” tools, but I’ll explain the tuning parameters and those benchmark tests in another post.

I’ve published all the websites for all environments by dividing the main domain (e.g. example.com) into several subdomains, such as dev01.example.com, int01.example.com, test01.example.com, pre.example.com, etc.

Furthermore, in production environments we can use this segmentation to split by country (es.example.com, it.example.com, …), platform (m.example.com, …) or content type (static.example.com, …).

The list of the main goals:

We use these technologies: Nginx, HAProxy, Pacemaker and Corosync.

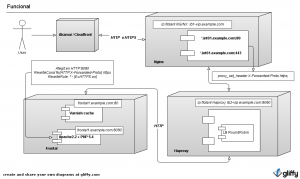

Look at the diagram of this solution:

I’ll explain this briefly by describing all the components.

First, browsers request content from the CDN or from our origin. All requests that reach our origin are filtered by the firewall. Once traffic is filtered it goes to nginx, which splits it by domain and handles the HTTPS negotiation. The traffic is then sent to HAProxy, which balances it across the different web servers.

To secure this infrastructure we define three virtual LANs: DMZ, frontend and backend. All this traffic is filtered by the firewall. Two virtual machines running CentOS are deployed in the DMZ; they run nginx and HAProxy in active-passive mode, with Pacemaker and Corosync managing this behaviour. Web servers are deployed in the frontend VLAN, while databases and shared filesystems such as MySQL, PostgreSQL, Cassandra, MongoDB, CIFS, HFS, NFS, etc. are deployed in the backend VLAN.

I’ll try to explain it better using just one environment in this example, int01.example.com, working from top to bottom. This will be a slightly more technical explanation. I hope this diagram helps you understand the architecture more easily.

First of all, nginx and HAProxy run in the DMZ VLAN with two virtual IP pools, one for each of them. I’ve rolled this out on VMware virtual infrastructure, but you can use cheaper solutions such as XenServer, KVM, etc. Tip: make sure each virtual machine runs on a different physical host; we need to add this rule to our virtual infrastructure. Requests arrive at the nginx daemon, which runs as a reverse proxy and splits traffic across multiple virtual hosts. Nginx also handles the HTTPS negotiation and uses plain HTTP to pass traffic on to HAProxy, adding an HTTP header (X-Forwarded-Proto) when the original transaction was HTTPS. Apache recognises this header and sets the proper variable so that the protocol change is hidden from the application.
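As a rough illustration of the header handling described above, this Python sketch shows how a backend could decide whether the original request was HTTPS by looking at X-Forwarded-Proto. The function name and the plain dict of headers are assumptions made for this example only; in this setup the real check is done by Apache’s rewrite rules, not by Python code.

```python
def original_scheme(headers):
    """Return the scheme the client originally used ("https" or "http").

    `headers` is a hypothetical dict of request headers as seen by the
    backend. Header names are case-insensitive, so they are normalised.
    """
    normalised = {name.lower(): value for name, value in headers.items()}
    proto = normalised.get("x-forwarded-proto", "").strip().lower()
    # Anything other than an explicit "https" is treated as plain HTTP.
    return "https" if proto == "https" else "http"


if __name__ == "__main__":
    print(original_scheme({"X-Forwarded-Proto": "https"}))
    print(original_scheme({"Host": "int01.example.com"}))
```

This only makes sense when the header can be trusted, that is, when the web servers are reachable only through the balancers.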

Tip: We use an SSL certificate with a wildcard in the Common Name, which makes it easier to manage.

Another tip: set the parameters properly according to this equation: max_clients = worker_processes * worker_connections / 4.
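To make the equation concrete, here is a small Python sketch that evaluates it. The input values (4 worker processes, 1024 connections each) are hypothetical and not taken from this setup.

```python
def max_clients(worker_processes, worker_connections):
    """Rule of thumb from the text for nginx used as a reverse proxy:
    max_clients = worker_processes * worker_connections / 4
    """
    return worker_processes * worker_connections // 4


if __name__ == "__main__":
    # Hypothetical values: 4 worker processes, 1024 connections per worker.
    print(max_clients(4, 1024))  # 1024
```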

All web traffic is split by nginx and passed on to HAProxy. We define a pool of upstream servers (a backend) in the HAProxy configuration, one pool per environment. HAProxy lets us choose the most suitable balancing algorithm; I usually use round robin. We also define IP ranges for every environment.
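For readers unfamiliar with round robin, this Python sketch shows the basic idea: each new request goes to the next server in the pool, wrapping around at the end. It is a simplified illustration, not HAProxy’s implementation, which is weighted and skips servers whose health check has failed. The server names are just the labels used in this example environment.

```python
from itertools import cycle


def round_robin(servers):
    """Return an endless iterator that yields servers in rotation."""
    return cycle(servers)


if __name__ == "__main__":
    picker = round_robin(["web01", "web02", "web03"])
    # Five consecutive requests: web01, web02, web03, web01, web02
    print([next(picker) for _ in range(5)])
```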

Tip: define IP ranges that are large enough to leave many free addresses; if we run into performance trouble, this lets us deploy more front-end servers without restarting the haproxy daemon.

In this example, HAProxy checks the health of every web server by requesting a PHP script that returns the string “OK” only if the node has an acceptable load average, all of its database connections succeed and it has free memory. Note that HAProxy also lets us do sticky balancing by analysing HTTP headers (session cookies) such as JSESSIONID in Java apps, PHPSESSID in PHP apps and ASPSESSIONID in ASP apps. Tip: each request needs about 17 KB of memory, which you can use to size the maxconn parameter.
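The 17 KB figure can be turned into a rough upper bound for maxconn. The Python sketch below assumes a memory budget you are willing to give to HAProxy; the 1 GiB used in the example is hypothetical, and the result is only a ceiling to start tuning from.

```python
def estimate_maxconn(memory_bytes, bytes_per_connection=17 * 1024):
    """Estimate how many connections fit in a given memory budget.

    The default of about 17 KB per connection comes from the tip above.
    """
    return memory_bytes // bytes_per_connection


if __name__ == "__main__":
    one_gib = 1024 ** 3  # hypothetical memory budget
    print(estimate_maxconn(one_gib))  # 61680
```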

Finally, a varnish-cache instance receives all requests on each server; non-cacheable and expired content is requested from Apache on the same node. These daemons deserve their own explanation, which I’ll give in another post.

Look at the configuration files below.

Nginx configuration:

    ....
    # Pool pointing at the HAProxy virtual IP.
    upstream http-example-int01 {
        server lb2-vip.example.com:8080;
        # Upstream keepalive only takes effect when proxied requests use
        # HTTP/1.1 with an empty Connection header.
        keepalive 16;
    }

    # Plain HTTP virtual host.
    server {
        listen lb1-vip.example.com:80;
        server_name int01.example.com ~^.*-int01\.example\.com$;

        location / {
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            proxy_set_header Host $host;
            proxy_pass http://http-example-int01/;
            proxy_redirect off;
        }
    }

    # HTTPS virtual host: nginx terminates SSL and forwards plain HTTP.
    server {
        # The "ssl" flag on the listen line enables SSL for this server.
        listen lb1-vip.example.com:443 ssl;
        server_name int01.example.com ~^.*-int01\.example\.com$;

        ssl_certificate /etc/nginx/ssl/crt/concat.pem;
        ssl_certificate_key /etc/nginx/ssl/key/example.key;

        location / {
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            # Tell the backend that the original request was HTTPS.
            proxy_set_header X-Forwarded-Proto https;
            proxy_set_header Host $host;
            proxy_pass http://http-example-int01/;
            proxy_redirect off;
        }
    }
    ....

HAProxy configuration:

    ...
    frontend example-int01
        bind lb2-vip.example.com:8080
        default_backend example-int01

    backend example-int01
        # Add an X-Forwarded-For header to requests sent to the web servers.
        option forwardfor
        # Health check: a node is up only if the response body contains "OK".
        option httpchk GET /healthcheck.php
        http-check expect string OK
        server web01 x.y.z.w:80 check inter 2000 fall 3
        server web02 x.y.z.w:80 check inter 2000 fall 3
        server web03 x.y.z.w:80 check inter 2000 fall 3
        server web04 x.y.z.w:80 check inter 2000 fall 3
        server web05 x.y.z.w:80 check inter 2000 fall 3
    ...
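The /healthcheck.php script requested by HAProxy is written in PHP and its source is not shown in this post. The Python sketch below only illustrates the decision described earlier (load average, database connections, free memory); the function name, its parameters and the threshold values are all hypothetical.

```python
def health_status(load_average, free_memory_mb, databases_ok,
                  max_load=4.0, min_free_mb=256):
    """Return "OK" only when every check passes, otherwise "FAIL".

    load_average   -- current load average of the node
    free_memory_mb -- free memory in megabytes
    databases_ok   -- list of booleans, one per database connection test
    The thresholds are made-up defaults for illustration.
    """
    healthy = (
        load_average <= max_load
        and free_memory_mb >= min_free_mb
        and all(databases_ok)
    )
    return "OK" if healthy else "FAIL"


if __name__ == "__main__":
    print(health_status(0.8, 1024, [True, True]))   # OK
    print(health_status(0.8, 1024, [True, False]))  # FAIL
```

Because HAProxy looks for the string “OK” in the response body, the failure text must not contain it.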

Apache configuration:

    ServerName int01.example.com
    DocumentRoot "/srv/www/example/fa-front/public"

    <Directory "/srv/www/example/fa-front/public">
        Options -Indexes +FollowSymLinks
        AllowOverride None
        Order Allow,Deny
        Allow from All

        RewriteEngine On
        # Expose HTTPS=on to the application when nginx handled SSL.
        RewriteCond %{HTTP:X-Forwarded-Proto} https
        RewriteRule .* - [E=HTTPS:on]
        # Serve existing files, symlinks and directories directly.
        RewriteCond %{REQUEST_FILENAME} -s [OR]
        RewriteCond %{REQUEST_FILENAME} -l [OR]
        RewriteCond %{REQUEST_FILENAME} -d
        RewriteRule ^.*$ - [NC,L]
        # Everything else goes to the front controller.
        RewriteRule ^.*$ index.php [NC,L]
    </Directory>

    SetEnv APPLICATION_ENV int01
    DirectoryIndex index.php
    # The nickname must match the one used by CustomLog.
    LogFormat "%v %{Host}i %h %l %u %t \"%r\" %>s %b %{User-agent}i" example
    CustomLog /var/log/httpd/cloud-example-front.log example

Pacemaker configuration:

    node balance01
    node balance02
    primitive nginx lsb:nginx \
        op monitor interval="1s" \
        meta target-role="Started"
    primitive haproxy lsb:haproxy \
        op monitor interval="1s" \
        meta target-role="Started"
    primitive lb1-vip ocf:heartbeat:IPaddr2 \
        params ip="x.x.x.x" iflabel="nginx-vip" cidr_netmask="32" \
        op monitor interval="1s"
    primitive lb2-vip ocf:heartbeat:IPaddr2 \
        params ip="y.y.y.y" iflabel="haproxy-vip" cidr_netmask="32" \
        op monitor interval="1s"
    group haproxy_cluster lb2-vip haproxy \
        meta target-role="Started"
    group nginx_cluster lb1-vip nginx \
        meta target-role="Started"
    property $id="cib-bootstrap-options" \
        dc-version="1.1.7-6.el6-148fccfd5985c5590cc601123c6c16e966b85d14" \
        cluster-infrastructure="openais" \
        expected-quorum-votes="2" \
        stonith-enabled="false" \
        last-lrm-refresh="1355137974" \
        no-quorum-policy="ignore"
    rsc_defaults $id="rsc-options" \
        resource-stickiness="100"